Blink vs. Think

When to Trust Your Gut and When to Override It

Gladwell said trust your instincts. Kahneman said distrust them. They were both right, and understanding why is one of the most practical things you can do for your decision-making.

I read Blink sometime in the mid-2000s and finished it feeling unusually confident. Malcolm Gladwell had just spent 250 pages explaining that my instincts were more sophisticated than I had given them credit for, that the unconscious mind was processing signals I couldn’t consciously articulate, and that some of my best decisions might be the fastest ones. I was a fan.

Then, a few years later, I read Daniel Kahneman’s Thinking, Fast and Slow and felt the floor shift. Here was a Nobel laureate with decades of rigorous experimental data explaining, in precise and somewhat uncomfortable detail, that fast thinking is riddled with systematic errors, that human intuition fails in predictable and measurable ways, and that the confidence we feel in our gut judgments is one of the least reliable signals available to us. I was equally convinced.

For a while, I held both books in my head as a kind of unresolved argument. Trust your gut. No, audit your gut. Blink. Think. The contradiction sat there, nagging, until I finally read the underlying academic literature closely enough to see what was going on. Gladwell and Kahneman are not contradicting each other. They are describing the same cognitive mechanism operating in different conditions – and the conditions are everything.

This piece is about what I figured out, how I turned it into a practical framework for my own decision-making, and why I think the AI moment we are living through makes this synthesis more important than ever.

Two Books, One Argument — Or So It Seems

Start with Gladwell. Blink is built around the concept of “thin-slicing” – the ability of the unconscious mind to extract meaningful patterns from small slices of experience. Gladwell’s examples are vivid and deliberately surprising: art experts who sensed within seconds that something was wrong with a supposedly ancient Greek statue the Getty Museum had bought, even though months of scientific analysis had seemed to confirm its authenticity. A psychologist, John Gottman, who could predict with high accuracy whether a couple would stay together after analyzing a short stretch of their conversation. A tennis coach, Vic Braden, who could call a double fault before the ball left the server’s racket. The message: your unconscious is smarter than you think. Get out of its way.

Then there is Kahneman. In Thinking, Fast and Slow, he divides cognition into two systems. System 1 is fast, automatic, emotional, and operates below conscious awareness. System 2 is slow, deliberate, effortful, and logical. The book is a catalog of the ways System 1 misleads us – anchoring, availability bias, narrative fallacy, the halo effect, overconfidence, loss aversion. His research, much of it conducted with his longtime collaborator Amos Tversky, demonstrated repeatedly that people make systematic, predictable errors when relying on intuition. The message: slow down. The fast brain is dangerous.

Read back-to-back, the books feel like a debate. They are actually a map. What neither author fully flags for the general reader is that they are each describing a different territory.

The Variable They Are Both Missing — Domain Validity

The key concept is what Kahneman and Klein call the “validity” of an environment – the degree to which a domain contains genuinely recurring, predictable patterns and provides reliable, rapid feedback from which expertise can be built.

In a high-validity environment, patterns repeat, the feedback loop is fast and honest, and expertise accumulates in ways that genuinely improve performance. Chess is the classic example. Emergency medicine, for experienced practitioners recognizing specific presentations, is another. A seasoned firefighter reading the behavior of a fire. A sommelier identifying a vintage blind. These are the kinds of environments Gladwell’s thin-slicers work in. They have done the reps in environments where the reps actually teach you something real.

In a low-validity environment, the signal-to-noise ratio is poor, feedback is delayed or absent, and the patterns that seem to repeat are often illusory. Long-range political and economic forecasting. Stock picking. Predicting which startup will succeed. Clinicians’ predictions of future behavior. These are the domains where Kahneman’s research concentrated its fire. And the data is brutal: in these environments, simple statistical models consistently match or outperform expert judgment, and experienced practitioners often perform no better than novices.

Gladwell’s error was failing to recognize that his examples were drawn almost exclusively from high-validity environments, and presenting thin-slicing as a general-purpose tool. Kahneman’s work is more rigorous about this distinction, but even Thinking, Fast and Slow does not make it as central or as actionable as it deserves to be. The clearest articulation lives in a 2009 academic paper that almost no one outside the field has read.

“Conditions for Intuitive Expertise: A Failure to Disagree”

Daniel Kahneman & Gary Klein — American Psychologist, 2009

This paper is the intellectual keystone that neither Blink nor Thinking, Fast and Slow makes fully explicit. Kahneman, the skeptic of intuition, and Klein, a psychologist who spent his career studying expert intuition in high-stakes domains like firefighting and military command, sat down to find out where they actually disagreed. The answer: less than either expected.

Their joint conclusion was that intuitive expertise is real and trustworthy – but only when two conditions are both present: (1) the environment must be sufficiently regular and predictable to allow skill to be encoded through experience, and (2) the practitioner must have had adequate opportunity to learn those regularities through prolonged practice with honest feedback. When both conditions are met, blink. When either is absent, think. The paper is available through the American Psychological Association and is worth the read for anyone serious about decision quality.

The Kahneman-Klein framework cuts through the apparent contradiction between the two books with surgical precision. The question is never whether to trust intuition. The question is whether you have earned the right to trust it in this specific domain, under these specific conditions.

The Danger Zone — Where It Gets Expensive

The most dangerous place in the Kahneman-Klein framework is not the clear high-validity zone (where your gut is probably calibrated) or the clear low-validity zone (where everyone knows to be careful). It is the middle – the domains that feel like high-validity environments because you have years of experience in them, but that are low-validity because the feedback loop was broken, misleading, or operating under conditions that no longer apply.

Investing is the most expensive example I know. A fund manager who outperformed for a decade in a falling-rate, liquidity-abundant environment has logged thousands of hours of apparent expertise. But how much of what they learned was genuinely about security selection and capital allocation – and how much was a persistent tailwind that felt like skill? The feedback loop rewarded them. It just was not teaching them what they thought it was teaching them.

DALBAR’s annual Quantitative Analysis of Investor Behavior has tracked this gap for thirty years. The average equity investor consistently underperforms the index they are invested in – not by a little, but by roughly 1.5 to 2 percentage points annually over long periods. The culprit is not a lack of information. It is behavioral: investors buy high on momentum and sell low on fear, guided by gut reads that feel expert but are executing at exactly the wrong moments. The intuition is fast. The environment in which it was calibrated does not reliably repeat.
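To see why a gap of 1.5 to 2 points a year is “not by a little,” it helps to compound it. The sketch below is illustrative arithmetic, not DALBAR data: it assumes a hypothetical 10% annual index return held for 30 years and an investor who trails it by a fixed gap every single year. The function names are made up for the example.

```python
def terminal_wealth(annual_return: float, years: int, start: float = 1.0) -> float:
    """Value of `start` after compounding `annual_return` for `years` years."""
    return start * (1.0 + annual_return) ** years


def behavior_gap(index_return: float, annual_gap: float, years: int) -> tuple[float, float, float]:
    """Return (index wealth, investor wealth, investor share of index wealth).

    index_return and annual_gap are hypothetical inputs, not DALBAR figures.
    """
    index_wealth = terminal_wealth(index_return, years)
    investor_wealth = terminal_wealth(index_return - annual_gap, years)
    return index_wealth, investor_wealth, investor_wealth / index_wealth


if __name__ == "__main__":
    for gap in (0.015, 0.02):
        idx, inv, share = behavior_gap(index_return=0.10, annual_gap=gap, years=30)
        print(f"gap {gap:.1%}: index x{idx:.1f}, investor x{inv:.1f}, "
              f"investor keeps {share:.0%} of the index outcome")
```

Under those assumptions, a 2-point annual gap leaves the investor with a bit under 60% of the index’s ending wealth, and a 1.5-point gap with roughly two-thirds. Real returns vary year to year, so treat this as an order-of-magnitude illustration.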

“Whether professionals have a chance to develop intuitive expertise depends essentially on the quality and speed of feedback, as well as sufficient opportunity to practice.”

— Daniel Kahneman & Gary Klein, American Psychologist, 2009

The same trap shows up in executive decision-making. A leader who built a successful business in one market regime develops strong intuitions about what works. Those intuitions feel like wisdom. They may be wisdom for the environment that produced them. When the environment shifts, the intuitions become a liability that is nearly impossible to detect from the inside, because being wrong feels exactly like being right: confidence reflects how coherent the story feels, not whether it is true.

This is precisely why AI raises the stakes. AI tools can now perform many of the pattern-recognition functions that intuition has historically handled. But they can also generate authoritative-sounding analysis that reinforces whatever the human operator already believed, at speed, dressed in the language of rigor. A miscalibrated expert with a powerful AI is not a corrected expert. They are a faster, more articulate version of the same miscalibration.

What AI Changes About This Calculus

Here is the uncomfortable implication for anyone building an investment thesis, a business strategy, or a hiring process in 2026: the domains where human intuition is most trustworthy are increasingly the domains where AI is becoming competitive. High-validity, pattern-rich, feedback-dense environments are exactly the kind of environments where machine learning excels. Radiology. Fraud detection. Quantitative trading signals. Quality control in manufacturing. If you have spent years developing expert intuition in one of these domains, part of that edge is being systematically transferred to algorithms that do not sleep, do not anchor, and do not experience loss aversion.

The domains where human judgment retains the most durable and compounding advantage are the low-validity, high-ambiguity spaces that Kahneman’s work illuminates. Complex, novel, multi-variable decisions with delayed feedback and no clean historical analog. Evaluating a founder’s character and resilience. Deciding whether a market is structurally different this time or cyclically the same. Navigating a business through a genuine discontinuity. These are not tasks AI handles well – yet. Nor does raw intuition handle them well, which is exactly Kahneman’s point.

What handles them well is disciplined, structured, self-aware reasoning – System 2 applied deliberately, with the humility to acknowledge which kind of environment you are actually in. That is the edge that compounds. That is what the framework below is designed to build.

The Buffington Framework — When to Blink, When to Think

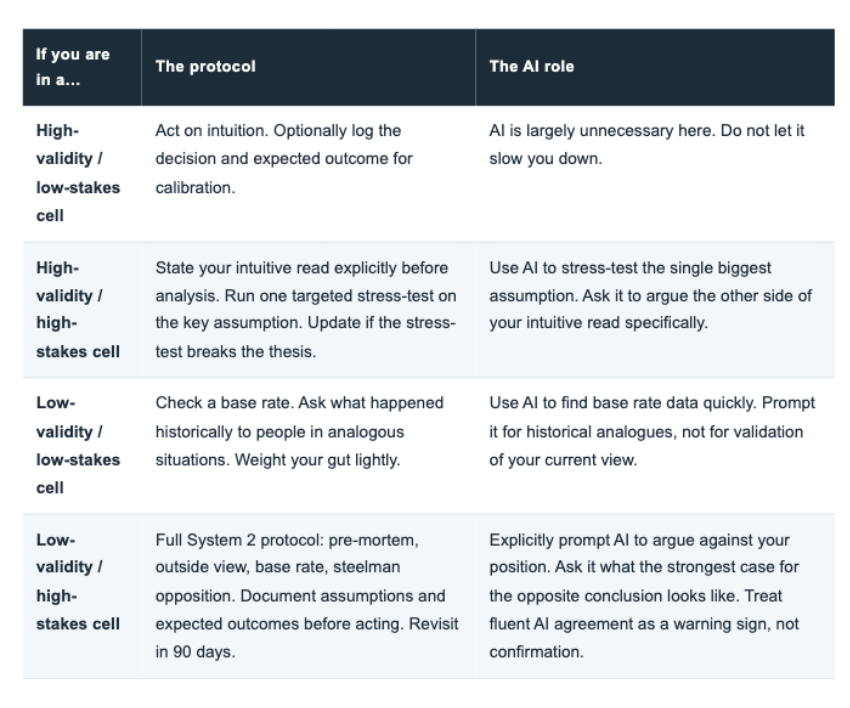

The framework operates in two stages. Stage one is the environment audit – a set of diagnostic questions designed to identify which kind of decision environment you are in, before you decide how much weight to give your gut. Stage two is the decision protocol – a different set of tools depending on what stage one reveals.

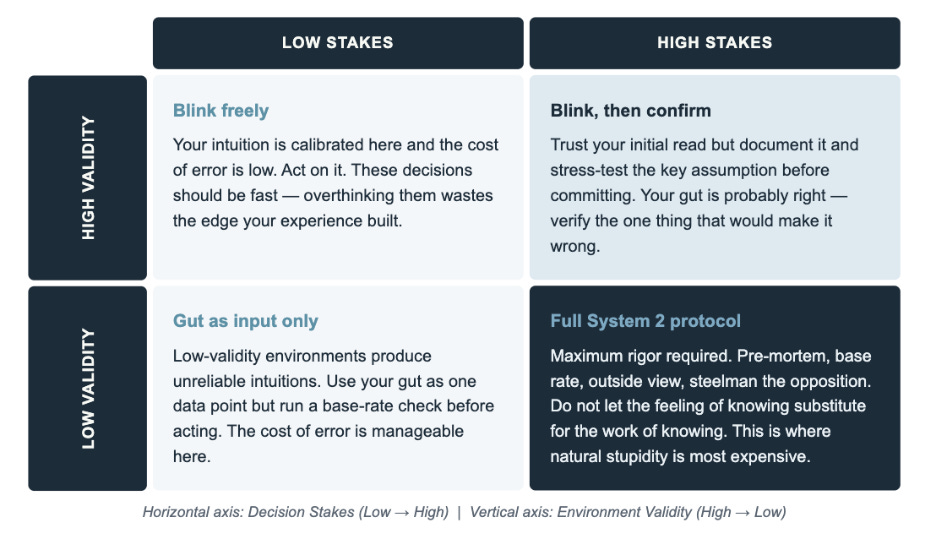

The 2×2 matrix below maps the intersection of environment validity and decision stakes, which together determine the appropriate level of System 2 intervention.

The Buffington Decision Matrix — Environment Validity vs. Decision Stakes
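The matrix can be read as a simple lookup from two answers to one protocol. In the sketch below, only the low-validity, high-stakes cell comes from the text (the full System 2 protocol); the labels for the other three cells are hypothetical placeholders, not the framework’s definitions, and the `Validity`, `Stakes`, and `recommend` names are illustrative.

```python
from enum import Enum


class Validity(Enum):
    HIGH = "high"
    LOW = "low"


class Stakes(Enum):
    LOW = "low"
    HIGH = "high"


# Only the (LOW validity, HIGH stakes) entry is specified in the text.
# The other three labels are hypothetical placeholders for illustration.
PROTOCOL = {
    (Validity.HIGH, Stakes.LOW): "blink: act on the trained gut",
    (Validity.HIGH, Stakes.HIGH): "blink, then a quick System 2 sanity check",
    (Validity.LOW, Stakes.LOW): "light System 2: check the base rate",
    (Validity.LOW, Stakes.HIGH): "full System 2 protocol",
}


def recommend(validity: Validity, stakes: Stakes) -> str:
    """Look up the level of System 2 intervention for one matrix cell."""
    return PROTOCOL[(validity, stakes)]


if __name__ == "__main__":
    print(recommend(Validity.LOW, Stakes.HIGH))
```

The value of writing it this way is that the two inputs have to be decided separately, before the recommendation is read off, which is the order the framework asks for.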

Stage One — The Environment Audit

Run these four questions before every significant decision. The answers tell you which cell of the matrix you are in.

1. How many times have I seen this exact pattern, in conditions that genuinely resemble today?

Not just “I have been doing this for twenty years.” The reps have to match the current environment. A career built on falling rates does not count as experience in rising ones.

2. How quickly and clearly did I receive feedback the last time I made this kind of call?

Fast, honest feedback is what calibrates intuition. If the feedback loop was slow, noisy, or if you never systematically tracked outcomes, your gut is flying on dead reckoning.

3. Can I articulate the causal mechanism behind my intuition, or am I matching a pattern I cannot fully explain?

Gladwell’s thin-slicers could not always explain their reads, but they were operating in genuinely high-validity environments. If you cannot explain the mechanism AND the validity is questionable, that is a red flag.

4. Do the best performers in this domain consistently beat chance over long periods, or does the research show expert and novice predictions converge?

This is the Kahneman-Klein validity test applied empirically. For stock picking, the research is clear: most active managers do not beat the index after fees over long periods. For specialist investors with a genuine informational edge in a narrow domain, the picture is different. Know which one you are.
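The four questions can be sketched as a rough scoring function that mirrors the two Kahneman-Klein conditions: question 4 tests whether the environment is regular, and questions 1 and 2 test whether you had the opportunity to learn it. Everything quantitative here is a hypothetical assumption: the 50-rep cutoff, the 5% luck threshold, and treating a track record as independent coin-flip periods. The `p_at_least_by_luck` helper makes question 4 concrete by asking how likely a no-skill performer would be to post the same record by chance.

```python
from dataclasses import dataclass
from math import comb


def p_at_least_by_luck(wins: int, periods: int, p: float = 0.5) -> float:
    """Chance that a no-skill performer (per-period win rate p) wins at least
    `wins` of `periods` independent periods: a one-sided binomial tail."""
    return sum(comb(periods, k) * p**k * (1 - p) ** (periods - k)
               for k in range(wins, periods + 1))


@dataclass
class AuditAnswers:
    matching_reps: int           # Q1: reps in conditions that resemble today
    fast_honest_feedback: bool   # Q2: was feedback fast, clear, and tracked?
    can_explain_mechanism: bool  # Q3: can you state the causal mechanism?
    track_wins: int              # Q4: best performers' wins ...
    track_periods: int           # ... out of this many periods


def audit(a: AuditAnswers, min_reps: int = 50, luck_threshold: float = 0.05) -> str:
    """Hypothetical scoring of the four audit questions into blink/think."""
    # Condition 1 (Q4): the domain's best performers beat chance
    env_regular = p_at_least_by_luck(a.track_wins, a.track_periods) < luck_threshold
    # Condition 2 (Q1 + Q2): enough matching reps AND fast, honest feedback
    experienced = a.matching_reps >= min_reps
    learned = experienced and a.fast_honest_feedback
    if env_regular and learned:
        return "blink"
    if experienced:
        verdict = "think: danger zone"  # years of reps, but calibration is suspect
    elif env_regular:
        verdict = "think: not enough matching reps"
    else:
        verdict = "think: low validity"
    # Q3: an unexplained pattern in a questionable environment is a red flag
    if not env_regular and not a.can_explain_mechanism:
        verdict += " (red flag: unexplained pattern)"
    return verdict
```

For example, `p_at_least_by_luck(8, 10)` is 56/1024, about 5.5%. If each year were a fair coin flip, roughly 55 of 1,000 managers with no skill at all would beat the index in at least 8 of 10 years, which is why a good decade on its own is weak evidence.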

Once you have audited the environment, Stage Two applies a different decision protocol depending on where you land.

The Synthesis in Plain Terms

Gladwell was right about experts operating in the right environments. Kahneman was right about what happens when people mistake the feeling of expertise for the reality of it. The Kahneman-Klein paper showed that both men were essentially describing the same underlying truth from different vantage points: the quality of intuition is a function of the quality of the environment that produced it.

What this means practically is that the work of good decision-making is not choosing between fast thinking and slow thinking. It is developing the meta-skill of knowing which one the situation calls for, and then having the discipline to use it. Philip Tetlock’s superforecaster research directly reinforces this. The forecasters who consistently outperformed were not the ones with the strongest priors or the most confident instincts. They were the ones who were most accurate about the nature of their own uncertainty – who knew which questions were forecastable and which were not, and calibrated their confidence accordingly.
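Tetlock’s forecasting tournaments scored this kind of self-knowledge with Brier scores, and a calibration check is the natural companion: when you say 75%, do those things happen about 75% of the time? The sketch below is a minimal version of both, assuming you have logged each forecast as a probability alongside a 0/1 outcome; the function names are illustrative.

```python
from collections import defaultdict


def brier_score(forecasts: list[float], outcomes: list[int]) -> float:
    """Mean squared error between probabilities and 0/1 outcomes (0 = perfect).

    This is the common binary form (range 0 to 1). The original two-outcome
    Brier score used in Tetlock's tournaments is exactly twice this value.
    """
    if len(forecasts) != len(outcomes) or not forecasts:
        raise ValueError("need equal-length, non-empty lists")
    return sum((f - o) ** 2 for f, o in zip(forecasts, outcomes)) / len(forecasts)


def calibration_table(forecasts: list[float], outcomes: list[int], bins: int = 10) -> dict:
    """Group forecasts into probability bins: bin -> (mean forecast, hit rate, count)."""
    groups = defaultdict(list)
    for f, o in zip(forecasts, outcomes):
        b = min(int(f * bins), bins - 1)  # a forecast of 1.0 goes in the top bin
        groups[b].append((f, o))
    table = {}
    for b, items in sorted(groups.items()):
        mean_f = sum(f for f, _ in items) / len(items)
        hit_rate = sum(o for _, o in items) / len(items)
        table[b] = (mean_f, hit_rate, len(items))
    return table
```

Lower Brier scores are better. A forecaster who says 50% on everything scores 0.25 in this binary form no matter what happens, which makes a useful floor to beat; the calibration table shows where, specifically, your confidence runs ahead of your hit rate.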

“The world makes much more sense to System 1 than it actually is. This is because System 1 suppresses doubt and ambiguity – and it is very good at constructing the best possible story from the evidence available.”

— Daniel Kahneman, Thinking, Fast and Slow

That meta-skill is what I am calling the stacked edge in the context of decision-making. Not faster thinking. Not more deliberate thinking. The right kind of thinking for the environment you are actually in. That distinction compresses years of expensive trial and error into a question you can ask yourself in sixty seconds, before you commit.

And here is the practical kicker for the AI era: the environment audit itself – the four questions above – is something you can now run in collaboration with an AI tool in minutes. Ask it to challenge your assessment of domain validity. Ask it what the research says about expert performance in your specific area. Ask it for the base rate before you tell it your thesis. Used this way, AI becomes a System 2 assistant rather than a System 1 accelerant. That distinction is worth a great deal.
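The ordering in that paragraph does most of the work, so it can help to write it down. The sketch below only builds prompt text; it calls no AI service, and the function name and wording are hypothetical. It withholds the thesis until after the base-rate and domain-validity questions, so those answers cannot be anchored on it.

```python
def system2_prompts(decision: str, domain: str, thesis: str) -> list[str]:
    """Build an ordered list of prompts that keeps the AI in a challenger role.

    The base-rate and validity questions come first and never mention the
    thesis; the thesis appears only when the AI is asked to argue against it.
    """
    return [
        # 1. Base rate first, thesis withheld
        f"Without knowing my view, what is the historical base rate of success "
        f"for decisions like this: {decision}?",
        # 2. Domain validity check (audit questions 2 and 4)
        f"What does research say about whether experts in {domain} beat simple "
        f"models or chance, and how fast and clear is feedback in {domain}?",
        # 3. Only now reveal the thesis, and ask for the opposing case
        f"My thesis is: {thesis}. Argue against it as strongly as you can. "
        f"Do not summarize or support it.",
        # 4. Pre-mortem
        "Assume it is a year from now and this decision failed. "
        "List the most likely reasons.",
    ]
```

Sending these one at a time, in order, is what turns the tool into a System 2 assistant rather than a System 1 accelerant.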

A Challenge to Take with You

Here is what I would ask you to do with this framework. Pick one domain in your professional life where you feel like an expert – where decisions feel fast, confident, and usually right. Run it through the four diagnostic questions honestly. Not to undermine your confidence, but to calibrate it. Ask whether the feedback loop that built your intuition was fast and honest, or whether it was slow, noisy, and possibly misleading about what was working.

Then pick one high-stakes decision you are facing right now. Identify which cell of the matrix it belongs in. If it lands in the bottom-right – low validity, high stakes – commit to the full System 2 protocol before you finalize your position. Pre-mortem it. Find the base rate. Ask someone you respect to argue the other side. Prompt an AI tool specifically to challenge your thesis rather than support it.
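“Find the base rate” has a concrete follow-through in the corrective procedure Kahneman describes in Thinking, Fast and Slow for taming intuitive predictions: start at the base rate, form your gut estimate, judge how strongly your evidence actually correlates with the outcome, and move from the base rate toward the gut only by that fraction. The sketch below assumes you supply the correlation estimate yourself, and the numbers in the example are hypothetical.

```python
def regressive_prediction(baseline: float, intuitive: float, correlation: float) -> float:
    """Shrink a gut estimate toward the base rate.

    baseline:    the base rate (average outcome) for the reference class
    intuitive:   the estimate your gut, or the evidence taken at face value, suggests
    correlation: how well you think the evidence predicts the outcome, from
                 0 (useless: stay at the base rate) to 1 (perfect: trust the gut)
    """
    if not 0.0 <= correlation <= 1.0:
        raise ValueError("correlation must be between 0 and 1")
    return baseline + correlation * (intuitive - baseline)


if __name__ == "__main__":
    # Hypothetical: a 7% average annual return for the reference class,
    # a gut call of 25% for this pick, and weak evidence (correlation 0.2).
    print(round(regressive_prediction(0.07, 0.25, 0.2), 3))
```

With those inputs the adjusted estimate is about 10.6%, much closer to the base rate than to the gut call. The weaker the evidence, the more the base rate should dominate.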

The goal is not to slow down every decision. The goal is to match the speed of your thinking to the validity of the environment. Blink when you have earned it. Think when the environment demands it. Knowing the difference is the whole game.

Questions for You

When you read Blink and Thinking, Fast and Slow, which one felt more true to your own experience – and does the domain validity framework change how you think about that reaction?

Is there a domain in your own work where you suspect your intuition was calibrated for a market regime that no longer exists? What would it take to acknowledge that honestly?

Have you ever run an AI tool through the adversarial protocol — explicitly asking it to argue against your position rather than support it? What happened?

I read every response. Reply directly or find me at markbuffington.substack.com

The sharpest thinking I do comes from conversations that start here.